Opened 9 years ago

Closed 9 years ago

#2009 closed defect (fixed)

PostgreSQL seems to be loading entire result set into memory

| Reported by: | Nicklas Nordborg | Owned by: | Nicklas Nordborg |

|---|---|---|---|

| Priority: | critical | Milestone: | BASE 3.9 |

| Component: | core | Version: | |

| Keywords: | Cc: |

Description (last modified by )

This was investigated for MySQL a long time ago and resulted in setting some parameters on the connection string (useCursorFetch=true&defaultFetchSize=100&useServerPrepStmts=true, see #953).

I guess the corresponding investigations were never made for PostgreSQL because it seems like the JDBC driver is loading the entire resultset into memory (this was seen when testing the Reggie release exporter http://baseplugins.thep.lu.se/ticket/887).

We need to investigate and make sure that PostgreSQL is using server-side cursors for streaming the result.

Attachments (2)

Change History (10)

comment:1 by , 9 years ago

| Description: | modified (diff) |

|---|

by , 9 years ago

| Attachment: | loading-142-out-of-memory.png added |

|---|

comment:2 by , 9 years ago

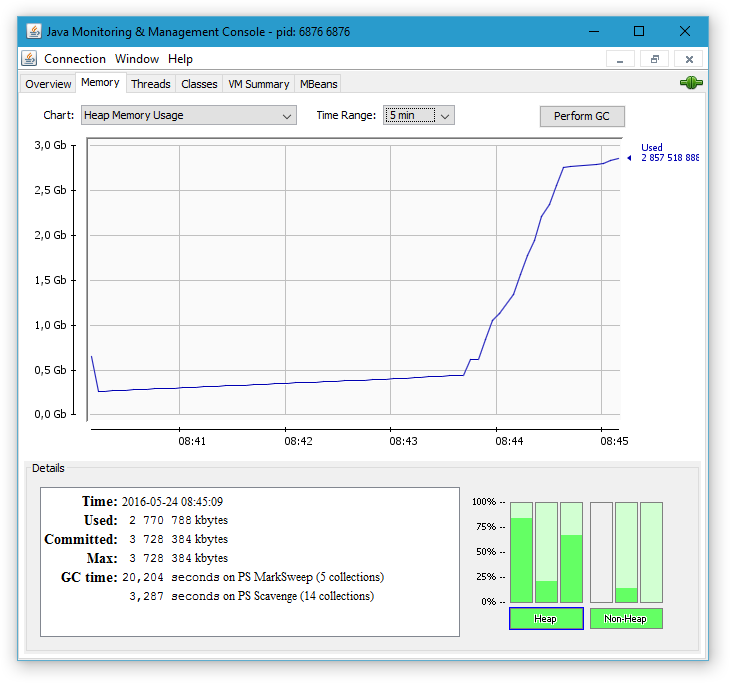

The test case is now a list with 142 raw bioassays containing 100k+ raw data entries each. Using the new DynamicRawDataQuery implemented in #2004 to load the data results in query that runs for a few minutes. Then, the memory usage increases very steeply until 100% CPU is used for garbage collection and the server hangs.

comment:3 by , 9 years ago

The main suspect is that the JDBC fetch size is not set. The default value of 0 means that the PostgreSQL JDBC driver will try to load the entire result into memory: https://jdbc.postgresql.org/documentation/head/connect.html (see the defaultRowFetchSize option a bit down on the page).

Trying to set this option in the db.url setting in base.config unfortunately seems to have no effect.

comment:4 by , 9 years ago

It should be possible to create the setting hibernate.jdbc.fetch_size in hibernate.cfg.xml (http://docs.jboss.org/hibernate/orm/5.0/userguide/html_single/Hibernate_User_Guide.html#configurations-database-fetch).

However, it seems like it is only used internally by Hibernate and that it is not applied to SQL statements that we use for the *dynamic* part were we bypass Hibernate (not so hard to guess that!).

by , 9 years ago

| Attachment: | loading-142-by-100.png added |

|---|

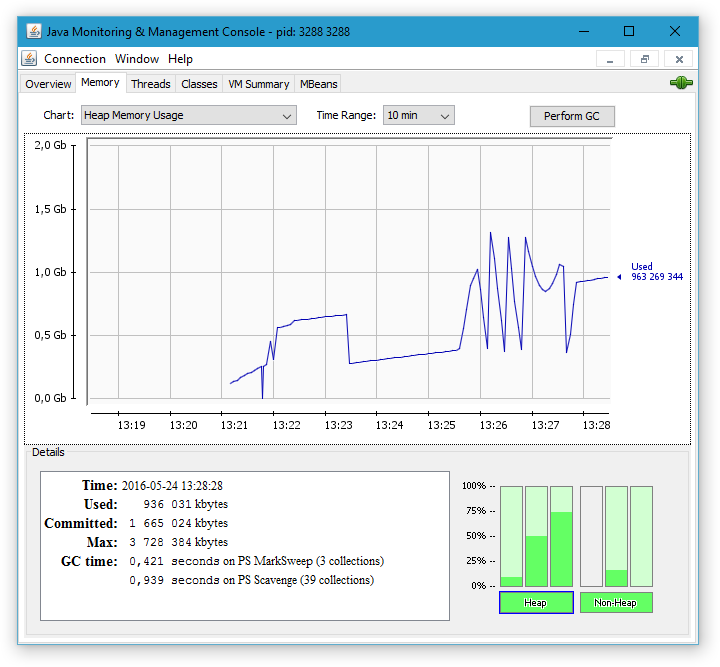

Load 142 x 100k raw data entries in batches by 100

comment:5 by , 9 years ago

Using the JDBC API to programmatically set the fetch size to 100. About the same time as before to execute the query (13:22:00-13:25:30). The next two minutes is loading/processing the raw data and writing it to the file. Memory usage is going up and down as expected. The important thing is that the "Old generation" (=left bar in the "Heap" section) is not filling up at all, which means that the memory is used only by temporary objects that can be garbage collected as soon as a data row has been processed. The total time for the exporter was less than 6 minutes.

comment:6 by , 9 years ago

(In [7156]) References #2009: PostgreSQL seems to be loading entire result set into memory

Adding jdbc.fetch_size configuration property to hibernate.cfg.xml. Hopefully Hibernate will pick it up wherever possible.

We are also picking it up in the BASE core API and use the same setting for queries were we bypass Hibernate.

It is verified to work for the raw data query in #2004 and http://baseplugins.thep.lu.se/ticket/887

comment:7 by , 9 years ago

| Owner: | changed from to |

|---|---|

| Status: | new → assigned |

Trying to load 142 x 100k raw data entries